What is Docker? Docker Container: A Deep Dive into Docker

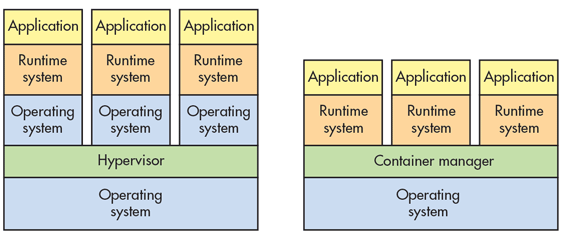

Docker has become very popular in today's fast-growing IT industry, and many organizations now use it in their development and production environments. Before discussing Docker containers, it helps to understand containerization. Before containerization arrived, the usual way to deploy and organize applications with their dependencies was to install each application in its own virtual machine. Several virtual machines can run on the same physical hardware, and this approach is known as virtualization. It has some disadvantages, however: virtual machines are bulky, running many of them can lead to unstable performance, and booting them is time-consuming. Virtual machines also do little to solve problems such as portability, software upgrades, or CI/CD implementation.

To address these issues, containerization was introduced. Containerization is a form of virtualization at the operating-system level, whereas traditional virtualization abstracts the hardware. Some basic benefits of containerization are:

- Containers normally do not include a guest operating system. They use the host's operating system kernel and share the relevant libraries and resources when required.

- Applications normally start and run fast because the application, together with its dependencies and libraries, runs directly on the host kernel.

- Starting a container typically takes a fraction of a second, since containers are much more lightweight than virtual machines.

About Containers

Containerization is an approach to software development in which an application or service, together with its dependencies and configuration, is packaged as a container image. The containerized application can then be tested as a complete unit and deployed as a container instance on the target operating system. A container image acts as a standard unit of software deployment that can hold different code and dependencies. This helps developers and IT professionals deploy containerized software across environments with little or no modification.

Containers also isolate applications from each other in a shared environment, and they have a much smaller footprint than virtual machine images. Another benefit of containerization is scalability: we can quickly create new containers for short-term tasks, which makes it easy to scale out. In a real environment, we also typically want each container (an instance of an image) to run on a different host or environment. In general, containers provide benefits such as portability, isolation, scalability, agility, and control across the entire application lifecycle workflow. Of these, isolation between the development and operations environments is one of the most important.

Overview of Docker

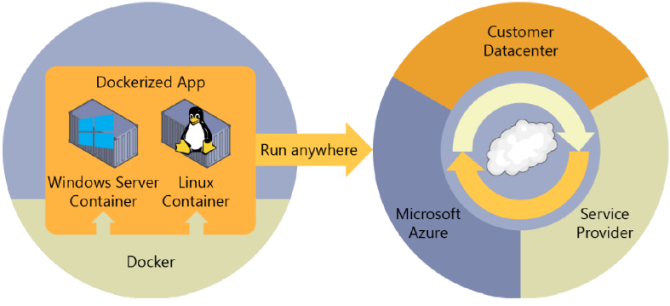

In simple words, Docker is an open-source project for automating the deployment of applications. Using it, we can deploy applications as portable, self-sufficient container images that run on any cloud or on-premises server. Docker is also the name of the company that promotes and develops this technology. Docker containers can run anywhere: in an on-premises customer data center or in a cloud environment such as Azure. Docker containers run natively on Linux. Windows container images run only on Windows hosts, whereas Linux images run on Linux hosts and also on Windows hosts with the help of a Hyper-V Linux VM.

To host containers in a development environment, Docker provides Docker Community Edition (CE) for Windows or macOS. This product installs the VM needed to act as the Docker host for the containers. Docker also provides Docker Enterprise Edition (EE) for enterprise deployments that build, ship, and run large business-oriented applications in production.

To run Docker containers on a Windows-based computer, we can use either of the following two container types:

Windows Server Containers

This type of container isolates applications using process isolation and namespace isolation. The kernel is shared between the container, the host, and the other containers running on that host.

Hyper-V Containers

This type of container provides stronger isolation by running each container in a highly optimized virtual machine. The kernel is not shared with the host or with other containers.
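
On a Windows host, the choice between these two types is made per container with the `--isolation` option of `docker run`, which accepts `process` (Windows Server container) or `hyperv` (Hyper-V container). The following is a minimal Python sketch that only builds the command line and does not execute it. The helper name `docker_run_command` and the example image name are assumptions chosen for illustration.

```python
from typing import List

# The two Windows isolation modes described above, as accepted by
# the `docker run --isolation` option.
ISOLATION_MODES = ("process", "hyperv")


def docker_run_command(image: str, isolation: str) -> List[str]:
    """Build (but do not execute) a `docker run` argument list."""
    if isolation not in ISOLATION_MODES:
        raise ValueError(f"unknown isolation mode: {isolation!r}")
    return ["docker", "run", "--rm", f"--isolation={isolation}", image]


if __name__ == "__main__":
    # Example image name, used here only for illustration.
    image = "mcr.microsoft.com/windows/nanoserver:ltsc2022"
    for mode in ISOLATION_MODES:
        print(" ".join(docker_run_command(image, mode)))
```

On a machine with Docker and Windows containers enabled, each returned list could be passed to `subprocess.run(cmd, check=True)` to start the container.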

Docker Terminology

In this section, we will discuss the different terms and definitions related to the Docker container concept.

- Container Image – A container image is a package that holds everything needed to create a container. It contains all the dependencies (such as frameworks and environments) as well as the deployment and execution configuration that the container uses at runtime.

- Dockerfile – A Dockerfile is a text file that contains all the instructions needed to prepare and build a Docker image. It works like a batch script: the first line states the base image to start from, followed by instructions to install the required programs, copy files, and so on, until the complete environment is ready.

- Build – Build is the action of creating a container image based on the information and context provided by the Dockerfile.

- Container – A container is not the same thing as a Docker image: it is a running instance of an image. A container represents the execution of an application, and it consists of the contents of a Docker image, a standard set of instructions, and an execution environment.

- Volumes – Volumes offer a writable filesystem that the container can use. Images are read-only, but most programs need to write to the filesystem. Volumes therefore add a writable layer on top of the container image so that the program has access to a writable filesystem. Volumes live on the host system and are managed by Docker.

- Tag – A tag is a label applied to an image so that we can tell apart different images or different versions of the same image.

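- Multi-stage Build – A feature available since Docker 17.05 that helps reduce the size of the final image. For example, a large base image containing the build tools can be used for compiling, and a small runtime-only base image for the final image.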

- Repository (repo) – A repository is a collection of related Docker images. Each image carries a tag that indicates its version.

- Registry – A registry is a service that provides access to repositories. Docker Hub is the default registry for public images. A registry can contain multiple repositories from different project teams.

- Docker Hub – Docker Hub is a public registry where we can store images and then use them. It offers Docker image hosting in public or private repositories, build triggers and webhooks, and integration with GitHub and Bitbucket.

- Azure Container Registry – For working with Docker images in Azure, the main resource is Azure Container Registry. It provides a registry that is close to our deployments in Azure and gives us control over access, making it possible to use our Azure Active Directory groups and permissions.
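
To make the Dockerfile, build, and multi-stage build entries above more concrete, here is a minimal Python sketch that generates the text of a two-stage Dockerfile. The function name `render_dockerfile`, the Go application, the file paths, and the image names `golang:1.22` and `alpine:3.19` are assumptions chosen for illustration, not part of any particular project.

```python
def render_dockerfile(build_image: str, runtime_image: str) -> str:
    """Return the text of a two-stage Dockerfile for a hypothetical Go app."""
    lines = [
        "# Stage 1: compile the application with the full toolchain.",
        f"FROM {build_image} AS build",
        "WORKDIR /src",
        "COPY . .",
        "RUN CGO_ENABLED=0 go build -o /out/app .",
        "",
        "# Stage 2: copy only the compiled binary into a small runtime image.",
        f"FROM {runtime_image}",
        "COPY --from=build /out/app /usr/local/bin/app",
        'ENTRYPOINT ["/usr/local/bin/app"]',
    ]
    return "\n".join(lines) + "\n"


if __name__ == "__main__":
    # Image names are illustrative assumptions.
    print(render_dockerfile("golang:1.22", "alpine:3.19"), end="")
```

Saving the returned text as a file named `Dockerfile` and running `docker build -t myapp:1.0 .` in the same directory (where `myapp:1.0` is a hypothetical name and tag) would perform the build. Only the second stage ends up in the final image, which is how a multi-stage build keeps the image small.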

Docker Containers, images, and registries

When using Docker, we create an application or service and package it together with its dependencies as a container image. The container image is a static representation of the application or service, including its configuration and dependencies. Containers are initially tested in the development environment or on a development server. We can also store the image in a registry, which acts as a library of images; a registry is also needed when deploying to production orchestrators. Docker maintains a public registry, Docker Hub. Other providers offer their own registries, such as Microsoft's Azure Container Registry. A registry works like a storage area: images are stored there and can be pulled to create containers that run the application or services.
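
An image name tells Docker which registry, repository, and tag to pull from. The following Python sketch splits a name into those three parts. It is a simplified approximation of how Docker interprets image names: it ignores digests (`@sha256:...`) and does no validation. `ImageReference`, `parse_image_reference`, and the registry host `myregistry.azurecr.io` are hypothetical names used for illustration.

```python
from typing import NamedTuple


class ImageReference(NamedTuple):
    registry: str
    repository: str
    tag: str


DEFAULT_REGISTRY = "docker.io"  # Docker Hub
DEFAULT_TAG = "latest"


def parse_image_reference(ref: str) -> ImageReference:
    """Split 'registry/repository:tag' into its parts (simplified, no digests)."""
    # A tag is introduced by a ':' that appears after the last '/', so the
    # port in 'localhost:5000/app' is not mistaken for a tag.
    last_slash = ref.rfind("/")
    colon = ref.rfind(":")
    if colon > last_slash:
        name, tag = ref[:colon], ref[colon + 1:]
    else:
        name, tag = ref, DEFAULT_TAG

    # The first path component is treated as a registry host only if it
    # looks like one (contains '.' or ':' or is 'localhost').
    first, sep, rest = name.partition("/")
    if sep and ("." in first or ":" in first or first == "localhost"):
        registry, repository = first, rest
    else:
        registry, repository = DEFAULT_REGISTRY, name
        if "/" not in repository:
            # Official Docker Hub images live under the 'library' namespace.
            repository = "library/" + repository
    return ImageReference(registry, repository, tag)


if __name__ == "__main__":
    for ref in ("nginx", "ubuntu:22.04", "myregistry.azurecr.io/team/api:1.4.2"):
        print(ref, "->", parse_image_reference(ref))
```

With this sketch, a bare name such as `nginx` resolves to the `library/nginx` repository on Docker Hub with the tag `latest`, while a name that starts with a host resolves to that registry instead.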

Docker maintains the public Docker Hub and also offers Docker Trusted Registry as an enterprise-grade solution, while Azure offers Azure Container Registry. Most major vendors, such as Microsoft, AWS, and Google, provide a container registry as well. Storing images in a registry lets us version them and deploy them in multiple environments, which gives us a consistent deployment unit.
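
As a rough sketch of this versioning workflow, the following Python function lists the `docker build`, `docker tag`, and `docker push` commands that would publish one versioned image to one or more registries. It only builds the argument lists and does not run them. The function name `release_commands`, the repository `team/api`, the version `1.4.2`, and the registry host are hypothetical.

```python
from typing import List


def release_commands(repository: str, version: str,
                     registries: List[str]) -> List[List[str]]:
    """Return docker CLI argument lists that build one image and push it to each registry."""
    local_ref = f"{repository}:{version}"
    # Build once from the Dockerfile in the current directory.
    commands = [["docker", "build", "-t", local_ref, "."]]
    for registry in registries:
        remote_ref = f"{registry}/{local_ref}"
        # `docker tag` adds another name for the same image; nothing is rebuilt.
        commands.append(["docker", "tag", local_ref, remote_ref])
        commands.append(["docker", "push", remote_ref])
    return commands


if __name__ == "__main__":
    # Hypothetical repository, version, and registry host.
    for cmd in release_commands("team/api", "1.4.2", ["myregistry.azurecr.io"]):
        print(" ".join(cmd))
```

Because the same image is pushed under the same version tag everywhere, every environment that pulls that tag from a given registry receives the same deployment unit. On a machine with Docker installed and logged in to the registry, each list could be run with `subprocess.run(cmd, check=True)`.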

Summary

Containerization is one of the most powerful techniques for software deployment in today's IT industry. In this article, we discussed the basic concept of containerization along with an overview of Docker and its terminology.
